OxNLP talk abstracts

Talk abstracts

24 May 2024

How to Know What Language Models Know

Dr Jennifer Hu, Harvard University

Abstract: Evaluation has long been essential to progress in AI and NLP. But as language models (LMs) become more sophisticated, their performance on benchmarks is increasingly being interpreted as evidence for reasoning, commonsense, or even intelligence itself. As such, one of the most important questions for our field is: how can we know what language models know? In this talk, I will first describe a framework for interpreting the outcomes of LM evaluations, inspired by the concept of linking hypotheses in cognitive science. I will then illustrate how different linking hypotheses can lead to vastly different conclusions about LMs' competence. In particular, prompt-based evaluations (e.g., "Is the following sentence grammatical? [sentence]") yield systematically lower performance than direct measurements of token probabilities. These results underscore the importance of specifying the assumptions behind our evaluation design choices before we draw conclusions about LMs' capabilities.

Bio: Jennifer Hu is a Research Fellow at the Kempner Institute for the Study of Natural and Artificial Intelligence at Harvard University, and an incoming Assistant Professor of Cognitive Science at Johns Hopkins University (starting July 2025). She earned a PhD from MIT in the Department of Brain and Cognitive Sciences, where she studied how language models can inform theories of human linguistic knowledge. Her research combines computational and experimental approaches to investigate how language works in minds and machines.

17 May 2024

Predicting and Controlling Mechanisms Learned by Large Language Models

Jack Merullo, Brown University

Abstract: Neural networks such as large language models (LLMs) have long been criticized for their inscrutability. As they become larger, more capable, and increasingly prevalent in everyday life, understanding how they produce the outputs that they do is of growing importance to ensure that they can be used safely and responsibly. In this talk, I will discuss work on understanding the computational patterns which Transformer LLMs learn to solve various tasks using tools from mechanistic interpretability. I will show that understanding patterns like the linear representation of features and reuse of circuits distributed across layers allows us to control model behaviors with fine-grained precision. Last, I will show how we can interpret simple mechanisms solely by analyzing model weights, opening the door to predicting LLMs' behavior before we run them.

10 May 2024

CAMEL: Communicative Agents for “Mind” Exploration of Large Language Model Society

Guohao Li, University of Oxford

Abstract: The rapid advancement of chat-based language models has led to remarkable progress in complex task-solving. However, their success heavily relies on human input to guide the conversation, which can be challenging and time-consuming. This paper explores the potential of building scalable techniques to facilitate autonomous cooperation among communicative agents, and provides insight into their "cognitive" processes. To address the challenges of achieving autonomous cooperation, we propose a novel communicative agent framework named role-playing. Our approach involves using inception prompting to guide chat agents toward task completion while maintaining consistency with human intentions. We showcase how role-playing can be used to generate conversational data for studying the behaviors and capabilities of a society of agents, providing a valuable resource for investigating conversational language models. In particular, we conduct comprehensive studies on instruction-following cooperation in multi-agent settings.

Bio: Guohao Li is a postdoc researcher at Oxford and an open-source contributor working on building intelligent agents that can perceive, learn, communicate, reason, and act. He is the core lead of the open source projects CAMEL-AI.org and DeepGCNs.org and a core member of PyG.org.

Guohao Li obtained his PhD degree in Computer Science at King Abdullah University of Science and Technology (KAUST) advised by Prof. Bernard Ghanem. During his Ph.D. studies, he worked at Intel ISL as a research intern. He visited ETHz CVL as a visiting researcher. He also worked at Kumo AI as the first intern. His primary research interests include Autonomous Agents, Graph Machine Learning, Computer Vision, and Embodied AI. He has published related papers in top-tier conferences and journals such as ICCV, CVPR, ICML, NeurIPS, RSS, 3DV, and TPAMI.

3 May 2024

Student Flash Talks

Anna George

Title: Investigating the Impact of White Supremacist Terrorist Manifestos on Migration Conversations

Abstract:

Radicalization can occur when individuals regularly engage with harmful online content. As individuals become radicalized, they develop increasingly hostile views towards out-group members (Berger, 2018). Individuals who perceive the “threat” of out-group members as reaching a climax may commit violent attacks, as has been the case in several white supremacist terrorist attacks in the last decade (Berger, 2018). The manifestos of such attackers often serve as examples of how damaging harmful online conversations can be in the physical world. However, there has been little research conducted on the possible bi-directional impact where the manifestos/terrorist attacks affect online discourse as well. Therefore, in this research we ask: Do white supremacist terrorists’ manifestos influence online conversations about migration? To study this question, we calculated the semantic similarity between Reddit posts and seven white supremacist terrorist manifestos. The aim was to understand the degree to which conversations about migration on Reddit mirrored the language found in these manifestos. In this presentation I will present early results from this research, and discuss future directions for this work.

Daniel Gottlich

Title: Protestantism and the Roots of Modern Science

Short abstract: A large historical literature has pointed to aspects of Protestantism as a potential cause for the Scientific Revolution (ca 1550-1700). In my dissertation, I will reevaluate this hypothesis empirically by constructing a city-year panel of scientific publications from the publishing locations of 1.5 million historical books (editions) 1450-1700. Since Early Modern book titles were typically long and descriptive, this also amounts to a fairly large multilingual body of text (ca 35 million words) which I intend to use to develop a proxy for the quality/innovativeness of a publication. My preliminary analyses – which cannot yet be interpreted causally – confirm a significant increase in scientific production in Protestant vis-a-vis Catholic regions. Statistically, this can be entirely explained with institutional and dynastic changes but more work is necessary to rule out alternative hypotheses.

Jabez Magomere

Title: Evaluating the Robustness of Claim Matching Embedding-based Methods to Misinformation Edits

Abstract:

Claim matching is a task that helps fact checkers retrieve fact checks or other verified claims relevant to a misinformation claim. Current claim matching methods rely on embedding models to generate dense vector representations that are used to match an input claim to a database of previous fact checks. However, as online users interact with and share misinformation, they introduce various edits to misinformation claims. These edits make it challenging for current claim matching systems to retrieve fact checks for the edited misinformation claims. We investigate whether current embedding models are robust to misinformation edits observed on social media, such as rewriting a claim in a different dialect, negating a claim, amplifying a claim, introducing typos, replacing named entities, and paraphrasing. We propose a method to generate misinformation edits by introducing minimal perturbations to an input claim while preserving the grammaticality, syntactic structure, and semantic meaning of the generated claims. We demonstrate that current embedding models are brittle to misinformation edits, resulting in widely varying representations of an input claim and its edited versions. This observation leads to decreased performance on two downstream tasks: retrieving relevant fact checks and classifying matched claims. To mitigate this issue, we propose a method that generates a synthetic dataset containing various misinformation edits and applies contrastive learning to improve an embedding model's robustness to such edits.
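As a rough illustration of the matching step described above, the sketch below retrieves the closest fact check for an edited claim by cosine similarity. It is a toy under stated assumptions: character-trigram counts stand in for the dense embedding models the abstract refers to, and the example claims and the helper names (`char_trigram_vector`, `cosine`, `match_claim`) are hypothetical, not taken from the work itself.

```python
import math
from collections import Counter


def char_trigram_vector(text):
    """Bag of character trigrams; a stand-in for a learned dense embedding."""
    padded = f"  {text.lower()} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def match_claim(claim, fact_checks):
    """Return the stored fact check most similar to the input claim."""
    claim_vec = char_trigram_vector(claim)
    return max(fact_checks, key=lambda fc: cosine(claim_vec, char_trigram_vector(fc)))


# Hypothetical database of previous fact checks.
FACT_CHECKS = [
    "Eating carrots does not give you night vision",
    "The Great Wall of China is not visible from space",
]

# An edited claim: a typo ("carots") plus a dropped negation.
edited_claim = "eating carots gives you night vision"
best_match = match_claim(edited_claim, FACT_CHECKS)
```

Comparing `cosine` for a claim against itself and against its edited version gives a simple way to see how far an edit moves the representation, which is the kind of brittleness the abstract measures with real embedding models.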

Lea Krause

Title: What does Culture Mean in NLP? Exploring the Lack of Cultural Diversity in Multilingual Datasets for Conversational AI

Cultural diversity in NLP goes beyond recognising different languages, requiring a deep understanding of cultural contexts. Our research focuses on multilingual datasets used in question-answering and dialogue systems, examining how these datasets consider cultural dimensions, if at all, and how culture is defined in their development. The goal is to identify gaps in cultural representation and propose methods for more accurately reflecting the broad spectrum of cultures in conversational AI, thus promoting greater inclusivity and accuracy in AI interactions.

26 April 2024

Oiwi Parker Jones, Neural Processing Lab, Department of Engineering Science

Leoākiko: Automatic Speech Recognition in Hawaiian

Despite efforts within the Hawaiian speaking community to annotate audio recordings of the language, each hour of transcribed audio takes about 30 hours of manual labour. In this talk, I will review recent work within the community to accelerate these efforts through the development of high-quality Hawaiian Automatic Speech Recognition (ASR) – known in Hawaiian as leoākiko (literally "speech-to-text", cf. kikoāleo "text-to-speech"). Specifically, I will touch on the use of foundation models; independent text corpora, language models, and rescoring; crowdsourcing; the benefits and limitations of word error rates; and, finally, plans for the future.
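For readers unfamiliar with the metric mentioned above, word error rate (WER) is conventionally the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. The following is a minimal sketch of that standard definition, not the evaluation code used in the talk; the function name and the example strings are illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the reference prefix seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from the hypothesis
            insertion = curr[j - 1] + 1  # extra word in the hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# A single missing macron counts as a whole-word error under this metric.
macron_example = word_error_rate("aloha kākou", "aloha kakou")
```

Because the comparison is per word, a hypothesis that differs from the reference only in one diacritic is penalised as heavily as a completely wrong word, which is one commonly noted limitation of WER.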

8 March 2024

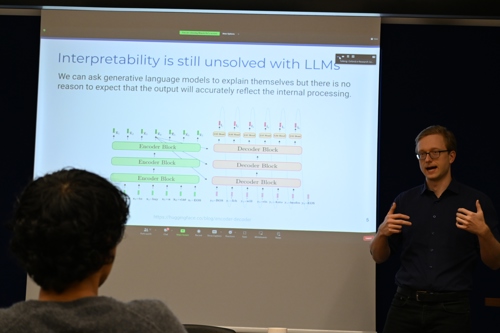

Panelists include Fazl Barez, Emanuele La Malfa

Panel discussion, on the theme of Benchmarking Large Language Models

This week we will have a special session: a panel discussion on the theme of Benchmarking Large Language Models. As Large Language Models are increasingly widely deployed, it matters more than ever to understand their capabilities and limitations. Benchmarking is vital for that understanding, but it is also fundamentally challenging, given the black-box nature of many models and the rapid improvement in their capabilities. We want to put these questions to researchers with rich experience in this area and hear their views.

Our panelists will include Fazl Barez, Emanuele La Malfa, and others to be confirmed later this week. We look forward to interacting with you and hope the discussion will be both interesting and insightful.

1 March 2024

Dr Guy Emerson, University of Cambridge

Truth conditions at scale, and beyond

Abstract: Truth-conditional semantics has been successful in explaining how the meaning of a sentence can be decomposed into the meanings of its parts, and how this allows people to understand new sentences. In this talk, I will first show how a truth-conditional model can be learnt in practice on large-scale datasets of various kinds (textual, visual, ontological), and how this provides empirical benefits compared to non-truth-conditional (vector-based) computational semantic models.

I will then take a step back to discuss the computational challenges of such an approach. I will argue it is (unfortunately) computationally intractable to reduce all kinds of language understanding to truth conditions, and so the truth-conditional account must be incomplete. To enable a more complete account, I will present a radically new approach to approximate Bayesian modelling, which rejects the computationally intractable ideal of using a single probabilistically coherent model. Instead, multiple component models are trained to mutually approximate each other. In particular, this provides a rigorous method for complementing a truth-conditional model with models for other kinds of language understanding. This new approach gives us a new set of tools for explaining how patterns of language use arise as a result of resource-bounded minds interacting with a computationally demanding world.

Links:

https://aclanthology.org/2023.emnlp-main.542/

https://aclanthology.org/2022.acl-long.275/

https://aclanthology.org/2023.starsem-1.37/

23 February 2024

Lionel Wong (PhD Student, MIT Brain and Cognitive Sciences)

From Word Models to World Models: Translating from Natural Language to the Probabilistic Language of Thought

https://arxiv.org/abs/2306.12672

Abstract: We think about the language that we hear. We can spend minutes or hours figuring out how to answer a question. We make plans on the basis of instructions or commands (or innocent queries about our long term goals), which might encompass dozens of fine-grained motor actions or abstract plans stretching years ahead. We imagine events in the past, the future, and wholly fictional universes on the basis of words. How do we derive meaning from language -- and how does the meaning that we get out of language go on to inform so much of our downstream thought, from planning and mental simulation to physical and downstream reasoning?

In this talk, we introduce rational meaning construction, a computational framework that models language-informed thinking by integrating learned, distributional language models with structured probabilistic languages for world modeling and inference. We frame linguistic meaning as a context-sensitive mapping from natural language into a probabilistic language of thought (PLoT)--a general-purpose symbolic substrate for generative world modeling. In this talk, I’ll discuss how this framework can be concretely implemented to model how language can update our beliefs and drive downstream inferences; relate language to other core domains of cognition; and even model how we might construct new concepts and theories from what we hear in language.

16 February 2024

Daniella Ye, Hunar Batra

Highlights of NeurIPS 2023

Papers:

- Linguistic Binding in Diffusion Models: Enhancing Attribute Correspondence through Attention Map Alignment [https://arxiv.org/abs/2306.08877]

- An Emulator for Fine-Tuning Large Language Models using Small Language Models [https://arxiv.org/abs/2310.12962]

- Fine-Grained Human Feedback Gives Better Rewards for Language Model Training [https://openreview.net/forum?id=CSbGXyCswu&ref=ruder.io]

- Direct Preference Optimization: Your Language Model is Secretly a Reward Model [https://arxiv.org/abs/2305.18290]

- Language Models Don’t Always Say What They Think: Unfaithful Explanations in Chain-of-Thought Prompting [https://arxiv.org/abs/2305.04388]

- Are Emergent Abilities of Large Language Models a Mirage? [https://arxiv.org/abs/2304.15004]

Hunar Batra: Hunar is a DPhil Computer Science student at the University of Oxford working on Large Vision Language Models. Her research explores aligning these models towards improved transparency of Chain-of-Thought reasoning and Visual Reasoning by augmenting reasoning architectures that interlink the models' true reasoning processes mechanistically and behaviourally to give better insights into their actions and interpreting the world model learnt by them. She is a Visiting Researcher at NYU Alignment Research group and Research Consultant at Anthropic working on mitigating biased and ignored reasoning in LLMs. In the past, she worked on aligning language models and exploring multiversal generational dynamics of LLMs as a Stanford Existential Risks Initiatives ML Scholar, and trained language models to predict SARS-CoV-2 mutations for her MSc CS dissertation at Oxford.

Daniella Ye: Daniella Ye (Zihuiwen Ye) is a DPhil Computer Science student at the University of Oxford. Her research focuses on exploring alternative ways for language generation such as employing diffusion models to improve long range text cohesion via non-autoregressive planning. In the past, she worked on improving text-to-code generation with self-play. She is currently doing an internship at Cohere.

9 February 2024

Fangru Lin, Emanuele La Malfa

Paper 1: Graph-enhanced Large Language Models in Asynchronous Plan Reasoning

http://arxiv.org/abs/2402.02805

Speaker: Fangru Lin

Abstract: Reasoning about asynchronous plans is challenging since it requires sequential and parallel planning to optimize time costs. Can large language models (LLMs) succeed at this task? Here, we present the first large-scale study investigating this question. We find that a representative set of closed and open-source LLMs, including GPT-4 and LLaMA-2, behave poorly when not supplied with illustrations about the task-solving process in our benchmark AsyncHow. We propose a novel technique called Plan Like a Graph (PLaG) that combines graphs with natural language prompts and achieves state-of-the-art results. We show that although PLaG can boost model performance, LLMs still suffer from drastic degradation when task complexity increases, highlighting the limits of utilizing LLMs for simulating digital devices. We see our study as an exciting step towards using LLMs as efficient autonomous agents.

Bio: Fangru is a DPhil Linguistics student at the University of Oxford. Her research focuses on using linguistically informed methods to assess and improve state-of-the-art LLMs’ performance in real-world tasks such as planning and using LLMs as autonomous agents. She is especially interested in the application of neuro-symbolic methods to general artificial intelligence. Industrially, she previously interned as a software engineer at Microsoft. For more information, please see Fangru's page.

Paper 2: Code Simulation Challenges for Large Language Models

https://arxiv.org/abs/2401.09074

Speaker: Emanuele La Malfa

Abstract: We investigate the extent to which Large Language Models (LLMs) can simulate the execution of computer code and algorithms. We begin by looking at straight line programs, and show that current LLMs demonstrate poor performance even with such simple programs -- performance rapidly degrades with the length of code.

We then investigate the ability of LLMs to simulate programs that contain critical paths and redundant instructions. We also go beyond straight line program simulation with sorting algorithms and nested loops, and we show the computational complexity of a routine directly affects the ability of an LLM to simulate its execution.

We observe that LLMs execute instructions sequentially and with a low error margin only for short programs or standard procedures.

LLMs' code simulation is in tension with their pattern recognition and memorisation capabilities: on tasks where memorisation is detrimental, we propose a novel prompting method to simulate code execution line by line. Empirically, our new Chain of Simulation (CoSm) method improves on the standard Chain of Thought prompting approach by avoiding the pitfalls of memorisation.

Bio: Emanuele is a postdoctoral researcher at the University of Oxford: he currently works on benchmarking Large Language Models funded by the Alan Turing Institute. He completed his PhD at Oxford on robustness as a property for Natural Language Processing.

2 February 2024

Isabelle Lorge, Myeongjun Jang

Title: Highlights of EMNLP 2023

Isabelle and Myeongjun both attended EMNLP 2023 (6-10 December in Singapore) as first authors of papers. On 2 Feb, they will give flash presentations of some other papers at the meeting that they thought were especially significant and interesting for the OxNLP group. The papers are:

- Fast and Accurate Factual Inconsistency Detection Over Long Documents

- Let’s Sample Step by Step: Adaptive-Consistency for Efficient Reasoning and Coding with LLMs

- Conceptual structure coheres in human cognition but not in large language models

- When the Majority is Wrong: Modeling Annotator Disagreement for Subjective Tasks

- MemeCap: A Dataset for Captioning and Interpreting Memes

- Dissecting Recall of Factual Associations in Auto-Regressive Language Models

- Towards Interpretable Mental Health Analysis with Large Language Models

- CLIMB – Curriculum Learning for Infant-inspired Model Building

- Emergent Linear Representations in World Models of Self-Supervised Sequence Models

- Rigorously Assessing Natural Language Explanations of Neurons

- Assessing Step-by-Step Reasoning against Lexical Negation: A Case Study on Syllogism

26 January 2024

Paul Röttger

Postdoctoral Researcher

MilaNLP Lab at Bocconi University

Title: Helpful or Harmful? Evaluating the Safety of Large Language Models

Without proper safeguards, large language models will readily follow malicious instructions and generate toxic content. This risk motivates safety efforts such as red-teaming and large-scale feedback learning, which aim to make models both helpful and harmless. However, there is a tension between these two objectives, since harmlessness sometimes requires models to refuse to comply with unsafe prompts, and thus not be helpful. In my talk, I will engage with this tension in two ways. First, I will present our recent work on XSTest, a test suite for identifying exaggerated safety behaviours in large language models, where models refuse to comply with safe prompts if they superficially resemble unsafe prompts. Second, I will speak on general challenges in evaluating (the safety of) large language models, summarising recent negative perspectives, but also providing a more positive outlook.

19 January 2024

Hannah Kirk

DPhil student

University of Oxford

Title: Who decides how LLMs behave? Re-thinking the role of pluralistic human preferences in steering LLMs

Human feedback is a powerful tool to steer large language models (LLMs) to write and act like “us”. Learning from human feedback has been the impetus for dramatic changes in the volume, variety and customisability of human-LLM interactions in the past year. This talk digs deeper into which humans are in the driving seat of LLM behaviour, and examines the current limitations in human feedback collection, particularly in terms of representation and conceptual clarity. While most of us agree that “alignment” is needed, no one can quite agree upon what it means in practice. To understand how different humans interpret what it means for an LLM to be aligned, I introduce the GRIFFIN Alignment Project: a large-scale dataset aimed at inclusive LLM alignment through granular, representative, and individualised feedback. I conclude by addressing the challenges of greater personalisation, fragmentation and customisation in the LLMs of today and the agentic AI of tomorrow, advocating for robust governance and dynamic communication strategies.

1 December 2023

Anej Svete

PhD Student, ETH Zurich

Title: Understanding Language Models with Formal Language Theory: Recurrent Neural Language Models as Recognizers of (Probabilistic) Formal Languages

Language models (LMs) are currently at the forefront of NLP research due to their remarkable versatility across diverse tasks. Technologists have begun to speculate about such capabilities; among these speculations are claims that large LMs are general-purpose reasoners or even that they could be a basis for general AI. However, a large gap exists between their observed capabilities and our theoretical understanding of those. Luckily, in the context of computer science, the notion of reasoning is concretely defined—it refers to the ability to execute algorithms. Hence, if we study LMs in terms of well-understood formalisms characterizing the complexity of algorithms that can be solved, we can more precisely describe LMs’ abilities and limitations.

With this motivation in mind, a large field of work has investigated the representational capacity of recurrent neural network (RNN) LMs in terms of their capacity to recognize formal languages—a well-established notion of the algorithmic capability of a formal model. In this talk, I will outline some classical results describing the representational capacity of RNNs. I will then connect this to the concrete task of language modeling, since LMs do not only describe unweighted formal languages. Rather, they define probability distributions over strings. We, therefore, pay special attention to the recognition of weighted formal languages and discuss what classes of probability distributions RNN LMs can represent. I will describe how simple RNN LMs with the Heaviside activation are equivalent to deterministic probabilistic finite-state automata, while ReLU-activated simple RNN LMs can model non-deterministic probabilistic finite-state automata and can, given unbounded computation time, even express any computable probability distribution.
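To make the automaton side of this equivalence concrete, here is a minimal sketch of a deterministic probabilistic finite-state automaton assigning probabilities to strings. The two-state machine, its weights, and the function name are hypothetical and chosen only for illustration. The intuition, stated loosely, is that a Heaviside-activated RNN has binary hidden states, so it can only be in finitely many configurations, and these play the role of the automaton's states.

```python
# Hypothetical deterministic PFSA over the alphabet {"a", "b"}.
# TRANSITIONS[state][symbol] = (probability, next_state).
# Determinism: each (state, symbol) pair leads to exactly one next state.
TRANSITIONS = {
    0: {"a": (0.5, 1), "b": (0.3, 0)},
    1: {"a": (0.1, 1), "b": (0.6, 0)},
}
# Probability of ending the string in each state; together with the outgoing
# transition probabilities, each state's weights sum to 1.
END_PROB = {0: 0.2, 1: 0.3}


def string_probability(string, start_state=0):
    """Probability the automaton assigns to `string`, including termination."""
    state, prob = start_state, 1.0
    for symbol in string:
        if symbol not in TRANSITIONS[state]:
            return 0.0  # symbol outside the alphabet
        step_prob, state = TRANSITIONS[state][symbol]
        prob *= step_prob
    return prob * END_PROB[state]
```

For example, `string_probability("ab")` multiplies the weight of reading `a` in state 0, reading `b` in state 1, and stopping in state 0.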

24 November 2023

Scott Hale

Title: Whose LLM? Representation, bias, and applications to misinformation

Large language models (LLMs) and generative AI could revolutionize computational social science, but their use also raises fundamental questions of representation and bias. Downstream users of LLMs need stronger evaluations in order to understand the languages, domains, and tasks within an LLM’s training and those which fall outside. This talk will present an overview of multiple studies. The first applies LLMs to identify misinformation narratives. The second demonstrates how current approaches to Reinforcement Learning from Human Feedback (RLHF) fail to address harmful, stereotypical outputs in non-Western contexts. Finally, the talk will present an in-progress experiment that aims to better understand what different people want from LLMs and how they perceive generative AI output.

17 November 2023

Harsha Nori

Microsoft

Title: Guidance — constrained sampling with formal grammars for controlling language models

Getting Language Models (LMs) to behave exactly the way we want them to can be challenging. Guidance is a popular open source library (14k+ stars) that combines the best of natural language prompting and code to help users structure prompts and express constraints. Guidance interfaces with many popular LM providers — both local, like HuggingFace and llama.cpp, and remote, like OpenAI — and provides a rich developer experience for constraining the output of LMs.

This talk will be a sneak preview of a major update to the guidance library. We’ll go over the basics of the guidance language, and — on the research side — discuss how guidance efficiently translates user specified constraints into formal grammars, which then make low level alterations to an LM sampling process. We’ll also discuss token healing — how subtle but important issues arise at prompt boundaries when translating from text to token space, and how guidance automatically heals these issues for users. We’ll end with a forward looking discussion on the future of constrained sampling.
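The general idea of constrained sampling can be sketched without reference to any particular library: at each step, tokens that the grammar does not allow are masked out before the distribution is renormalised. The code below is a toy illustration of that idea only. It does not use or reproduce the guidance API, and the vocabulary, the one-rule grammar, and the function names are all hypothetical.

```python
import math

# Hypothetical toy vocabulary.
VOCAB = ["yes", "no", "maybe", "7", "42", "<eos>"]


def allowed_next_tokens(generated):
    """Hypothetical grammar: answer := ("yes" | "no") "<eos>"."""
    if not generated:
        return {"yes", "no"}
    return {"<eos>"}


def constrained_distribution(logits, generated):
    """Softmax over the logits after masking tokens the grammar forbids."""
    allowed = allowed_next_tokens(generated)
    masked = [
        logit if token in allowed else -math.inf
        for token, logit in zip(VOCAB, logits)
    ]
    top = max(masked)  # finite as long as at least one token is allowed
    exps = [math.exp(x - top) for x in masked]  # exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]


# The unconstrained model prefers "maybe", but the grammar rules it out.
example_logits = [0.1, 0.2, 3.0, 1.0, 0.5, 0.0]
first_step = constrained_distribution(example_logits, generated=[])
```

Sampling from `first_step` can only ever produce `yes` or `no`, with their relative probabilities unchanged from the unconstrained model.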

10 November 2023

Ved Mathai

DPhil student, Oxford e-Research Centre, University of Oxford

Title: Sparks of Artificial General Intelligence: Early experiments with GPT-4

(https://arxiv.org/pdf/2303.12712.pdf)

Abstract: While GPT-4 has awed both academia and the world beyond with its seemingly human-like capabilities, this report by Microsoft takes early steps towards trying to grasp its true capabilities. The operative word here is 'sparks'. While GPT-4 may seem intelligent, its intelligence only appears in fleeting sparks. Existing benchmarks are not designed to measure creativity and there is always a chance that GPT-4 had ingested existing benchmarks to become good at them. The authors try to qualitatively judge its power with semi-systematic qualitative judgements, while asking in the abstract if we need a better definition of 'general intelligence' anyway. Since many in the reading group are interested in benchmarking the power of GPT-4, this report promises to provide the different aspects of 'intelligence' we should be testing when designing benchmarks for the different new-age large language models.

3 November 2023

Marek Rei

Senior Lecturer in Machine Learning, Imperial College London

Title: Interpretable architectures and guided attention for neural language models

Neural models of natural language have achieved remarkable results, but their interpretability remains an open issue. While they are able to output accurate predictions, it is unclear how they reached their decision and whether it was for the right reasons.

In this work, we investigate neural architectures for representing language that are inherently interpretable - they are able to point to relevant evidence in the input by themselves. This is achieved by careful use of attention, having the model dynamically make decisions about which input areas are important to consider. Furthermore, we can directly supervise this attention, teaching the model to make decisions based on the same evidence as humans.

27 October 2023

Aleksandar Petrov

DPhil student, Autonomous Intelligent Machines and Systems CDT, University of Oxford

Title: When do Prompting and Prefix-Tuning Work? A Theory of Capabilities and Limitations

Context-based fine-tuning methods like prompting, in-context learning, soft prompting (prompt tuning) and prefix-tuning have gained popularity as they often match the performance of full fine-tuning with a fraction of the parameters. Despite their empirical successes, there is little theoretical understanding of how these techniques influence the internal computation of the model and their expressiveness limitations. We show that despite the continuous embedding space being much more expressive than the discrete token space, soft-prompting and prefix-tuning are strictly less expressive than full fine-tuning. Concretely, context-based fine-tuning cannot change the relative attention pattern over the content and can only bias the outputs of an attention layer in a fixed direction. While this means that fine-tuning techniques such as prompting, in-context learning, soft prompting and prefix-tuning can successfully elicit or combine skills already present in the pretrained model, they cannot learn tasks requiring new attention patterns.
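The claim about relative attention patterns can be checked numerically in a toy setting. The sketch below assumes a single attention head, a single query, and content keys that are themselves unaffected by the prefix; all vectors are made-up numbers and the variable names are illustrative. Adding a prefix key takes some attention mass for itself, but once the remaining weights are renormalised over the content positions they match the prefix-free attention pattern.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


# Made-up query, content keys, and a hypothetical learned prefix key.
query = [0.5, -1.0, 2.0]
content_keys = [[1.0, 0.0, 0.5], [0.2, -0.3, 1.0], [-1.0, 0.4, 0.1]]
prefix_key = [2.0, 1.0, -0.5]

content_scores = [dot(query, key) for key in content_keys]

# Attention over the content alone.
without_prefix = softmax(content_scores)

# Attention when one prefix position is prepended.
with_prefix = softmax([dot(query, prefix_key)] + content_scores)
prefix_weight, content_weights = with_prefix[0], with_prefix[1:]

# Renormalise over the content positions only.
renormalised = [w / (1.0 - prefix_weight) for w in content_weights]
```

Here `renormalised` equals `without_prefix` up to floating-point error: the prefix scales every content weight by the same factor `1 - prefix_weight` and contributes its own value vector, which corresponds to biasing the layer output in a fixed direction.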

20 October 2023

Janet Pierrehumbert

Professor of Language Modelling, Department of Engineering Science, University of Oxford

Title: Mismatches between human language processing and NLP

NLP algorithms have achieved high performance on many tasks. However, this is often at the expense of exorbitant training. Even with such training, they fall short on certain tasks that humans perform easily and reliably. This talk will give an overview of some well-established core properties of human language processing. It will summarize the extent to which SOTA transformer models and generative models incorporate, or fail to incorporate, these properties.

16 June 2023

Jingwei Ni, from ETH & UZH

Title: When Does Aggregating Multiple Skills with Multi-Task Learning Work? A Case Study in Financial NLP

Abstract: Multi-task learning (MTL) aims at achieving a better model by leveraging data and knowledge from multiple tasks. However, MTL does not always work – sometimes negative transfer occurs between tasks, especially when aggregating loosely related skills, leaving it an open question when MTL works. Previous studies show that MTL performance can be improved by algorithmic tricks. However, what tasks and skills should be included is less well explored. In this work, we conduct a case study in Financial NLP where multiple datasets exist for skills relevant to the domain, such as numeric reasoning and sentiment analysis. Due to the task difficulty and data scarcity in the Financial NLP domain, we explore when aggregating such diverse skills from multiple datasets with MTL can work. Our findings suggest that the key to MTL success lies in skill diversity, relatedness between tasks, and choice of aggregation size and shared capacity. Specifically, MTL works well when tasks are diverse but related, and when the size of the task aggregation and the shared capacity of the model are balanced to avoid overwhelming certain tasks.

26 May 2023

Pushpak Bhattacharyya

Bhagat Singh Rekhi Chair Professor of Computer Science and Engineering at IIT Bombay

Title: Natural Language Processing and Mental Health

Abstract: According to the WHO, the number of mental health patients worldwide is about 1000 million, and about 14% of deaths in the world are due to mental disorders. The ratio of doctors to patients in mental health support is about 1:10000. This situation underlines the need for automation in mental health monitoring. In this talk, we present our ongoing work on using natural language processing (NLP) and machine learning (ML) to detect mental conditions and to generate positive and reassuring statements that can pull a person back from the brink of taking extreme steps. The data sets, classification, and text generation framework will be described, pointing to rich possibilities for future work.

Bio: Prof Pushpak Bhattacharyya is Bhagat Singh Rekhi Chair Professor of Computer Science and Engineering at IIT Bombay. He has done extensive research in Natural Language Processing and Machine Learning. Some of his noteworthy contributions are in Indian Language NLP (such as IndoWordnet), Cognitive NLP, Low Resource MT, and Knowledge Graph-Deep Learning Synergy in Information Extraction and Question Answering. He served as President of the ACL (Association for Computational Linguistics) in 2016.

19 May 2023

William Wang

Associate Professor of Computer Science

University of California, Santa Barbara

Title: On bias, trustworthiness, and safety of language models

Bio: William Wang is the Co-Director of UC Santa Barbara's Natural Language Processing group and Center for Responsible Machine Learning. He is the Duncan and Suzanne Mellichamp Chair in Artificial Intelligence and Designs, and an Associate Professor in the Department of Computer Science at the University of California, Santa Barbara. He has published more than 100 papers at leading NLP/AI/ML conferences and journals, and received best paper awards (or nominations) at ASRU 2013, CIKM 2013, EMNLP 2015, and CVPR 2019, a DARPA Young Faculty Award (Class of 2018), an IEEE AI's 10 to Watch Award (Class of 2020), and many more. He frequently serves as an Area Chair or Senior Area Chair for NAACL, ACL, EMNLP, and AAAI. He is an elected member of the IEEE Speech and Language Processing Technical Committee (2021-2023) and a member of the ACM Future of Computing Academy.

12 May 2023

Edoardo Ponti

Lecturer in Natural Language Processing

University of Edinburgh

Title: Modular Deep Learning

Abstract: "Transfer learning has recently become the dominant paradigm of machine learning. Pre-trained models fine-tuned for downstream tasks achieve better performance with fewer labelled examples. Nonetheless, it remains unclear how to develop models that specialise towards multiple tasks without incurring negative interference and that generalise systematically to non-identically distributed tasks. Modular deep learning has emerged as a promising solution to these challenges. In this framework, units of computation are often implemented as autonomous parameter-efficient modules. Information is conditionally routed to a subset of modules and subsequently aggregated.

These properties enable positive transfer and systematic generalisation by separating computation from routing and updating modules locally. In this talk, I will introduce a general framework for modular neural architectures, providing a unified view over several threads of research that evolved independently in the scientific literature. In addition, I will provide concrete examples of their applications: 1) cross-lingual transfer by recombining task-specific and language-specific task sub-networks; 2) knowledge-grounded text generation by Fisher-weighted addition of modules promoting positive behaviours (e.g. abstractiveness) or negation of modules promoting negative behaviours (e.g. hallucinations); 3) generalisation to new NLP and RL tasks by jointly learning to route information to a subset of modules and to specialise them towards specific skills (common sub-problems reoccurring across different tasks).
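The idea of conditionally routing information to a subset of modules and then aggregating their outputs can be illustrated with a small sketch. This is not code from the talk: the function name `route_and_aggregate`, the toy modules, the fixed router scores, and the choice of top-k selection with softmax weighting are all assumptions made for illustration, and the modular architectures the abstract refers to may route and aggregate differently.

```python
import math

def route_and_aggregate(x, modules, router_scores, k=2):
    """Send x to the k highest-scoring modules; return the weighted sum of their outputs.

    Hypothetical helper: top-k routing with softmax weights is one possible
    routing scheme, assumed here for illustration only.
    """
    # Routing: indices of the k modules with the highest router scores.
    top = sorted(range(len(modules)), key=lambda i: router_scores[i], reverse=True)[:k]
    # Softmax over the selected scores so the aggregation weights sum to 1.
    exps = [math.exp(router_scores[i]) for i in top]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Aggregation: weighted sum of the selected modules' outputs.
    output = sum(w * modules[i](x) for w, i in zip(weights, top))
    return output, top

# Toy modules standing in for parameter-efficient sub-networks.
modules = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: -x]

# The third module has a low score, so only the first two are used.
output, selected = route_and_aggregate(3.0, modules, router_scores=[0.0, 0.0, -5.0], k=2)
print(selected, output)
```

In a trained model the router scores would be computed from the input rather than fixed by hand; they are constants here only to keep the sketch short.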