Control Group Education

Distributed Control of Crazyflie Quadcopters

Quadcopters and other Unmanned Aerial Vehicles (UAVs) are becoming increasingly commonplace; they are used for filming, food delivery, surveillance, etc. The CrazyFlie quadcopter is designed to be flown indoors, weighs just 27 grams and fits in the palm of a hand. It provides an excellent platform for research and allows algorithms to be tested in practice, indoors. The drone's firmware is readily available online and maintained by BitCraze, so it can be easily reconfigured and reprogrammed before being uploaded onto the CrazyFlie microprocessor.

Each member of the current fleet of CrazyFlie nano-drones has a low-level cascade PID controller onboard, in the firmware. This controller, which can be easily readjusted or completely rewritten, makes sure the drone tracks its velocity, position and angular setpoints. These setpoints can be generated automatically in the firmware or sent from a computer. Communication takes place via the CrazyFlie-specific CRTP protocol through the CrazyRadio 2.4 GHz USB dongle. For the localisation of the copters we use an onboard approach, specifically the optical-flow-based sensor called FlowDeck. By taking a number of images of the floor underneath, the FlowDeck uses a Kalman Filter to estimate the drone's position relative to its starting point. The onboard approach is a necessity as we strive towards the complete independence of the drones from any external sensors or actuators, e.g. motion capture cameras. Current research addresses the full decentralization of the control of a fleet of drones. The fleet should be able to track position and velocity setpoints while also avoiding collisions and matching velocities, achieving full flocking behaviour. The minimum requirement for this is to define a specific topological graph of the network of drones, in which neighbours can communicate with each other.
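
To make the decentralized idea concrete, the following is a minimal sketch of a flocking update in which each drone computes its own acceleration command from its own state and the states of its graph neighbours only. It is an illustration under stated assumptions, not the controller running on the fleet: the planar double-integrator model, the ring-shaped neighbour graph, the gains `K_TRACK`, `K_MATCH`, `K_AVOID`, the safety distance `SAFE_DIST` and all function names are hypothetical.

```python
import math

# All constants below are illustrative assumptions, not values from the real fleet.
K_TRACK = 1.0    # gain pulling each drone towards the shared velocity setpoint
K_MATCH = 1.0    # gain for matching the velocities of graph neighbours
K_AVOID = 4.0    # gain of the repulsion used for collision avoidance
SAFE_DIST = 0.5  # neighbours closer than this [m] repel each other
DT = 0.05        # integration step [s]


def local_acceleration(i, pos, vel, neighbours, v_ref):
    """Acceleration command for drone i, using only its neighbours' states."""
    ax = K_TRACK * (v_ref[0] - vel[i][0])
    ay = K_TRACK * (v_ref[1] - vel[i][1])
    for j in neighbours[i]:
        # Velocity matching: move towards the neighbour's velocity.
        ax += K_MATCH * (vel[j][0] - vel[i][0])
        ay += K_MATCH * (vel[j][1] - vel[i][1])
        # Collision avoidance: push away from neighbours that are too close.
        dx = pos[i][0] - pos[j][0]
        dy = pos[i][1] - pos[j][1]
        dist = math.hypot(dx, dy)
        if 0.0 < dist < SAFE_DIST:
            push = K_AVOID * (SAFE_DIST - dist) / dist
            ax += push * dx
            ay += push * dy
    return ax, ay


def simulate(pos, vel, neighbours, v_ref, n_steps):
    """Integrate planar double-integrator drones driven by the local rule."""
    pos = [list(p) for p in pos]
    vel = [list(v) for v in vel]
    for _ in range(n_steps):
        # Every drone evaluates its command from the same snapshot of states.
        acc = [local_acceleration(i, pos, vel, neighbours, v_ref)
               for i in range(len(pos))]
        for i, (ax, ay) in enumerate(acc):
            vel[i][0] += DT * ax
            vel[i][1] += DT * ay
            pos[i][0] += DT * vel[i][0]
            pos[i][1] += DT * vel[i][1]
    return pos, vel


# Hypothetical ring topology: each of the four drones talks to two neighbours.
NEIGHBOURS = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
start_pos = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
start_vel = [(0.1, 0.0), (-0.1, 0.0), (0.0, 0.1), (0.0, -0.1)]
final_pos, final_vel = simulate(start_pos, start_vel, NEIGHBOURS, (0.2, 0.0), 400)
```

In this sketch the only shared information is the neighbour graph and the velocity setpoint; no drone needs the state of the whole fleet, which is the property a fully decentralized scheme relies on.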

Fully distributing the control of the fleet has a number of computational and practical advantages, but comes at the cost of added complexity and communication-link requirements. Overcoming these challenges yields a robust distributed framework with a plethora of opportunities. With the current setup in place, we can extend the capabilities of the fleet to include tracking non-stationary ground objects, surveillance of a ground area, automatic wireless charging or collective task completion, to name just a few.

Racing Car Platform

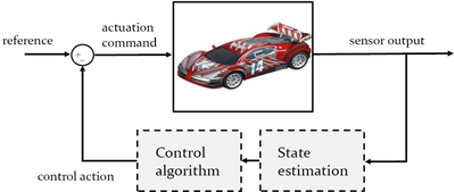

We have developed a test-bed for various control algorithms related to low-level control of vehicles, as well as trajectory optimization and collision avoidance. Emphasis is placed on real-time implementation, while position and velocity information is provided via a camera-based system.

Different control algorithms to automatically regulate the position and velocity of the cars have been developed. The current implementation is based on real-time Model Predictive Control (MPC), where a finite-horizon optimization program is solved online to determine the optimal sequence of steering commands. The first part of this sequence is implemented, the horizon is then rolled forward and the process is repeated. This introduces feedback into the cars' driving strategy while allowing different objectives to be traded off and hard constraints, such as the track boundaries, to be met.
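
The receding-horizon principle can be illustrated with a small sketch. It is not the solver used on the platform: to stay self-contained it replaces the online optimization program with a brute-force search over a few candidate commands, and the kinematic model, the yaw-rate command standing in for steering, the cost weights and all constants and function names are assumptions made for illustration.

```python
import itertools
import math

# Illustrative assumptions, not parameters of the real cars or track.
DT = 0.1                          # sample time [s]
SPEED = 1.0                       # constant forward speed [m/s]
HALF_WIDTH = 0.5                  # track half-width [m], a hard constraint
STEER_OPTIONS = (-1.0, 0.0, 1.0)  # candidate yaw-rate commands [rad/s]
HORIZON = 5                       # number of steps in the prediction horizon


def step(state, yaw_rate):
    """Simple kinematic model: state = (lateral offset y, heading psi)."""
    y, psi = state
    return (y + DT * SPEED * math.sin(psi), psi + DT * yaw_rate)


def plan(state):
    """Search all command sequences; return the cheapest feasible one."""
    best_cost, best_seq = math.inf, None
    for seq in itertools.product(STEER_OPTIONS, repeat=HORIZON):
        s, cost, feasible = state, 0.0, True
        for u in seq:
            s = step(s, u)
            if abs(s[0]) > HALF_WIDTH:  # prediction leaves the track
                feasible = False
                break
            # Trade off centre-line tracking, heading and steering effort.
            cost += s[0] ** 2 + 0.1 * s[1] ** 2 + 0.01 * u ** 2
        if feasible and cost < best_cost:
            best_cost, best_seq = cost, seq
    return best_seq


def run_closed_loop(state, n_steps):
    """Apply only the first planned command, then re-plan from the new state."""
    trajectory = [state]
    for _ in range(n_steps):
        seq = plan(state)
        if seq is None:
            raise RuntimeError("no feasible command sequence found")
        state = step(state, seq[0])
        trajectory.append(state)
    return trajectory


# Start 0.3 m off the centre line and let the controller steer back.
trajectory = run_closed_loop((0.3, 0.0), 60)
```

Re-planning at every step is what introduces feedback: any mismatch between predicted and actual motion is absorbed by the next optimization, while sequences that would cross the track boundary are discarded outright.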

The general control architecture underpinning the operation of each car is based on the following sequence of steps:

- The camera-based vision system captures the cars on the track. To this end, each of them is characterised by a unique marker pattern.

- The position and velocity of each car are estimated by means of a state estimation algorithm and broadcast to the computer used for control calculation.

- The control inputs (e.g., speed commands) are sent via Bluetooth to the embedded microcontroller board of each car, which then drives around the track.
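
The state estimation step in the sequence above can be sketched as follows. The platform's actual estimator is not specified here, so this example swaps in an alpha-beta filter as a simple stand-in; the class name, the gains `alpha` and `beta`, the 50 Hz camera rate and the test motion are all assumptions for illustration.

```python
class AlphaBetaFilter:
    """Estimate position and velocity along one axis from position readings."""

    def __init__(self, dt, alpha=0.5, beta=0.1, x0=0.0, v0=0.0):
        self.dt = dt        # time between camera frames [s]
        self.alpha = alpha  # correction gain on position (assumed value)
        self.beta = beta    # correction gain on velocity (assumed value)
        self.x = x0         # position estimate [m]
        self.v = v0         # velocity estimate [m/s]

    def update(self, measured_position):
        # Predict forward assuming constant velocity over one frame.
        x_pred = self.x + self.dt * self.v
        # Correct both estimates with the measurement residual.
        residual = measured_position - x_pred
        self.x = x_pred + self.alpha * residual
        self.v = self.v + (self.beta / self.dt) * residual
        return self.x, self.v


# Hypothetical usage: a car moving at 1.5 m/s seen by a 50 Hz camera.
dt = 0.02
true_velocity = 1.5
estimator = AlphaBetaFilter(dt)
for k in range(1, 201):
    position_estimate, velocity_estimate = estimator.update(true_velocity * k * dt)
```

One filter per axis would be run for each car, and the resulting position and velocity estimates are what the control computer would receive before computing the next command.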

The developed platform is currently used for a series of student projects, as well as outreach events.